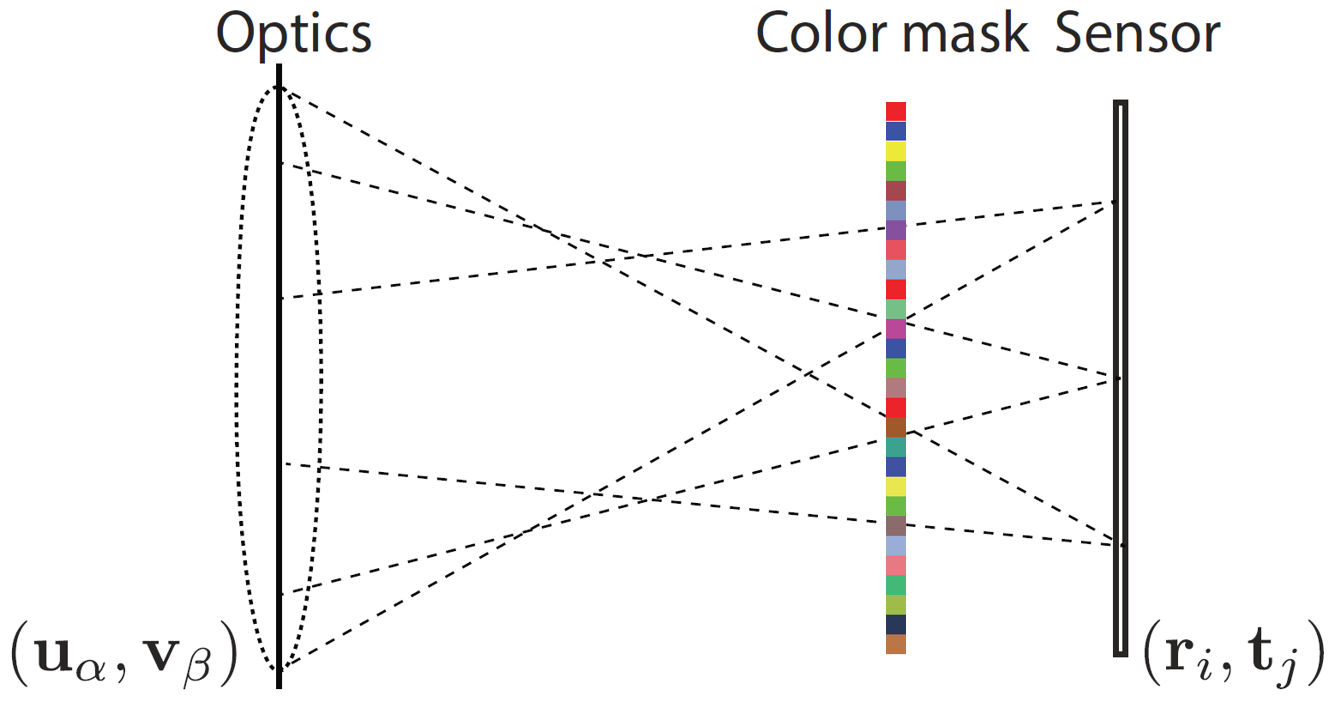

We present a compressed sensing framework for reconstructing the full light field of a scene captured using a single-sensor consumer camera. To achieve this, we use a color coded mask in front of the camera sensor. To further enhance the reconstruction quality, we propose to utilize multiple shots by moving the mask or the sensor randomly. The compressed sensing framework relies on a training-based dictionary over a light field data set. Numerical simulations show significant improvements in reconstruction quality over a similar coded aperture system for light field capture.

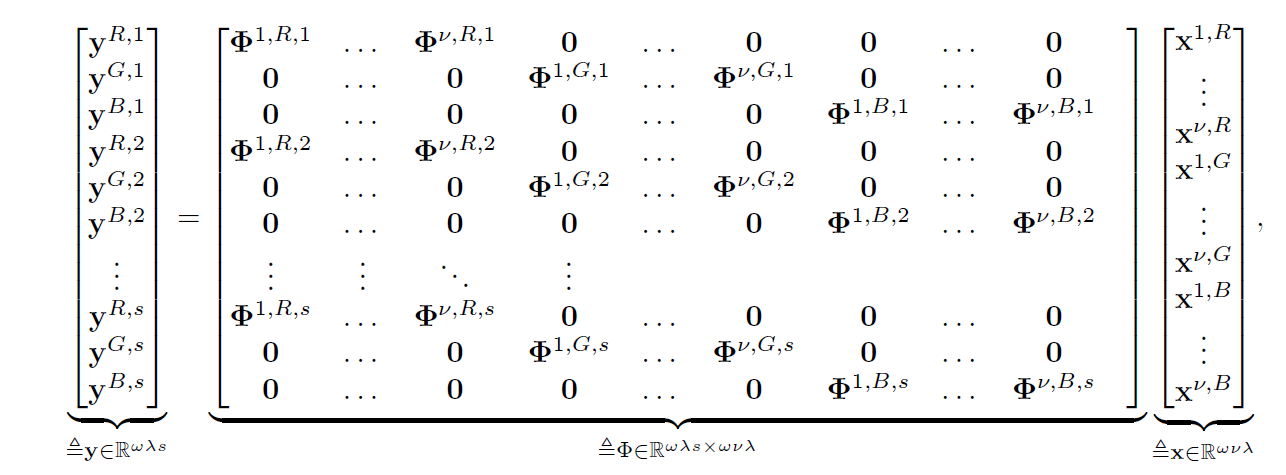

Unlike prior methods that use monochrome masks, here we use a random color mask. To further increase the measurement matrix incoherence, we use multiple shots, each with a different random colored mask. To achieve this, we propose to use a piezo system for rapid mask movement. The sensing model with the color mask, for s shots, can therefore be formulated as

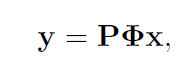
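The multi-shot sensing model can be illustrated with a small NumPy sketch. It is not the authors' implementation: it assumes that each shot records, for every pixel and color channel, the sum over angular views of the light field weighted by that shot's mask value, and that masks are drawn uniformly at random. The function name `multi_shot_measurements`, the array layout, and the toy sizes are all hypothetical.

```python
import numpy as np


def multi_shot_measurements(light_field, masks):
    """Simulate s coded shots of a light field (assumed additive model).

    light_field: array of shape (views, pixels, channels)
    masks:       array of shape (shots, views, pixels, channels)
    Returns an array of shape (shots, pixels, channels).
    """
    # For every shot k, pixel p and channel c, sum the mask-weighted views v.
    return np.einsum("kvpc,vpc->kpc", masks, light_field)


rng = np.random.default_rng(0)
n_views, omega, lam, s = 25, 64, 3, 4  # hypothetical toy sizes

light_field = rng.random((n_views, omega, lam))
# One independent random color mask per shot (assumed uniform in [0, 1]).
masks = rng.random((s, n_views, omega, lam))

shots = multi_shot_measurements(light_field, masks)
y = shots.reshape(-1)  # stacked measurement vector of length omega * lam * s
```

Under these assumptions, adding a shot appends another `omega * lam` measurements taken with a fresh mask, which is how multiple shots add independent rows to the measurement matrix.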

To provide flexibility over the trade-off between computational complexity and reconstruction quality, we use spatial subsampling in the measurement model. This can be done by a sampling matrix P as follows

with

\begin{equation}
P \in \mathcal{R}^{r \omega \lambda s}
\end{equation}

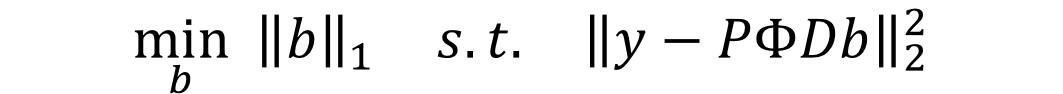

where $r$ is the sampling ratio, $\lambda$ is the number of color channels, $\omega$ is the spatial resolution of each view, and $s$ is the number of shots. The light field is reconstructed by solving

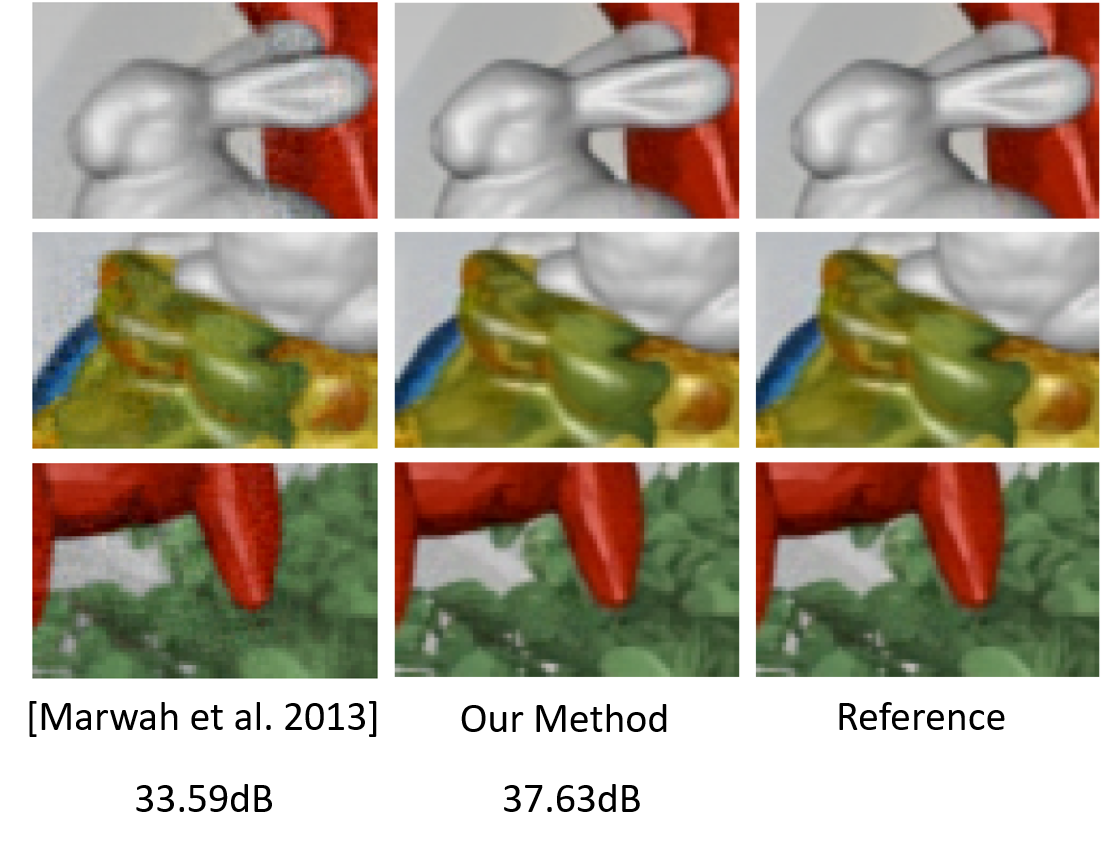
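The subsampling and recovery steps can be sketched on a toy problem as follows. Several things here are assumptions rather than statements from the text: P is modeled as a random row-selection matrix, the product of the measurement matrix and the learned dictionary is replaced by a random Gaussian matrix `A_full`, and orthogonal matching pursuit (OMP) is used as a stand-in sparse solver because the solver is not named. The helper names `sampling_matrix` and `omp` are hypothetical.

```python
import numpy as np


def sampling_matrix(m, r, rng):
    """Row-selection matrix keeping round(r * m) of m measurements (assumed form of P)."""
    k = int(round(r * m))
    rows = np.sort(rng.choice(m, size=k, replace=False))
    P = np.zeros((k, m))
    P[np.arange(k), rows] = 1.0
    return P


def omp(A, y, max_atoms, tol=1e-10):
    """Greedy sparse solve of y ~= A @ coef (stand-in solver, not from the source)."""
    col_norms = np.linalg.norm(A, axis=0)
    coef = np.zeros(A.shape[1])
    support = []
    residual = y.copy()
    for _ in range(max_atoms):
        if np.linalg.norm(residual) <= tol * np.linalg.norm(y):
            break
        # Pick the atom most correlated with the residual (normalized columns).
        idx = int(np.argmax(np.abs(A.T @ residual) / col_norms))
        if idx in support:
            break
        support.append(idx)
        # Least-squares refit on the current support.
        sol, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        coef[:] = 0.0
        coef[support] = sol
        residual = y - A[:, support] @ sol
    return coef


rng = np.random.default_rng(0)
m, n_atoms = 120, 200  # m plays the role of omega * lambda * s (toy sizes)

# Stand-in for (measurement matrix) @ (learned dictionary).
A_full = rng.standard_normal((m, n_atoms))
alpha_true = np.zeros(n_atoms)
alpha_true[[5, 50, 150]] = [1.0, -2.0, 1.5]  # sparse code of the light field
y_full = A_full @ alpha_true

P = sampling_matrix(m, r=0.5, rng=rng)  # keep half of the measurements
alpha_hat = omp(P @ A_full, P @ y_full, max_atoms=10)
```

Lowering `r` shrinks the system passed to the solver, which reduces cost but leaves fewer measurements for recovering the sparse code. This mirrors the trade-off between computational complexity and reconstruction quality described above.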

Experimental Results

The figure below shows results in comparison with the method of Marwah et al.

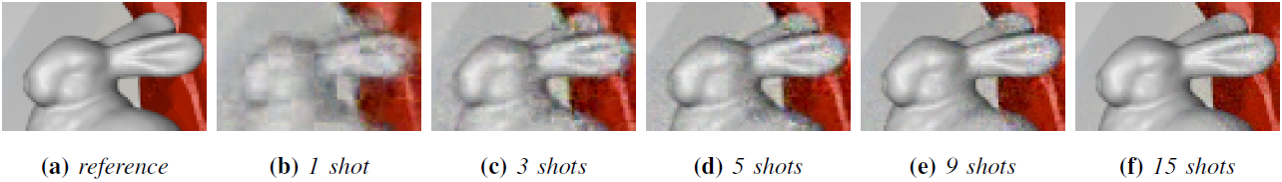

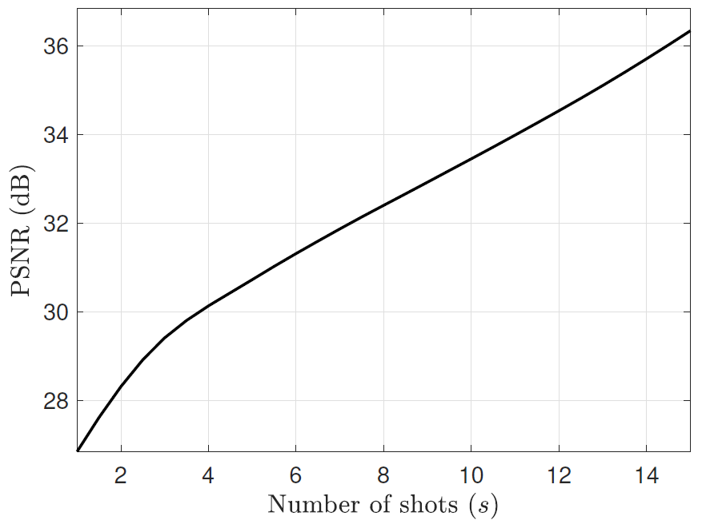

The figures below show how the reconstruction quality evolves with the number of shots.